Progress in multimedia analysis

CATALOGUS

The same point also helps in full text retrieval. When texts are included as

counter examples in the query, the computer may be able to determine the

proper response much more quickly.

For query by example, a distinction should be made between external examples

brought in by the user and internal examples where the user has selected an item

from the database. When the example is external, in practice the query example

is not annotated, so the system can only search for similar items on the basis of

the content descriptors described above. When the example is internal,

similarity can also be based on the annotations of the items.
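The difference between external and internal examples can be made concrete with a minimal sketch. It assumes, purely for illustration, that each item carries a numeric content descriptor and a set of annotation keywords. The `Item` class, the cosine and Jaccard measures, and the mixing weight `alpha` are hypothetical choices and not part of any system described here.

```python
from dataclasses import dataclass, field
from math import sqrt


@dataclass
class Item:
    name: str
    descriptor: list[float]  # content descriptor, e.g. a coarse colour histogram
    annotations: set[str] = field(default_factory=set)  # empty for external examples


def cosine(a, b):
    """Cosine similarity between two descriptor vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def jaccard(a, b):
    """Overlap between two annotation sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank(query, database, internal, alpha=0.5):
    """Return item names ordered by decreasing similarity to the query.

    External example: only content descriptors are compared.
    Internal example: descriptor and annotation similarity are mixed
    with the (assumed) weight alpha.
    """
    def score(item):
        content = cosine(query.descriptor, item.descriptor)
        if not internal:
            return content
        return alpha * content + (1 - alpha) * jaccard(query.annotations, item.annotations)

    candidates = [item for item in database if item is not query]
    return [item.name for item in sorted(candidates, key=score, reverse=True)]


database = [
    Item("sunset_beach", [0.9, 0.1, 0.0], {"sunset", "beach"}),
    Item("orange_fruit", [0.8, 0.2, 0.0], {"fruit"}),
    Item("sunset_city", [0.5, 0.2, 0.3], {"sunset", "city"}),
]

# External example: brought in by the user, so it has no annotations.
external = Item("user_photo", [0.8, 0.2, 0.0])
external_ranking = rank(external, database, internal=False)

# Internal example: an item selected from the database, annotations included.
internal_ranking = rank(database[0], database, internal=True)

print(external_ranking)
print(internal_ranking)
```

In this toy data the external query ranks the orange fruit first because only colour content is compared, whereas the internal query prefers the other sunset once the shared annotation is taken into account.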

In practice the user will not get the answer directly from one of the above query

types, but will engage in an interactive session with the system where advanced

visualization and relevance feedback from the user are iteratively used to bring

the user closer to the desired information. Ideally, the system actively

participates in finding the best solution by posing the most informative

questions or showing the most informative results to the user.

Figure 3. Example of an advanced visualization tool where the user gives

feedback to the system by indicating relevant and non-relevant items.

Interactivity poses heavy demands on the computing, storage, and display

capacity of the system. Users want immediate feedback on their queries, but

answering a query may require computing a large set of descriptors when external examples

are used, followed by a comparison of the descriptors of all elements in the

dataset with the query. Advanced database techniques are required to limit the

search. In addition, interactive search stretches the functionality of the

presentation devices to the limit. Nevertheless, interactivity compensates for the

inability of the computer to take account of context. In a full interaction

scheme, the user may modify not only the query but also what is considered

similar and what counts as a good example or counter example. With

relevance feedback and visual presentation of the best results (see

Figure 3), current content-based retrieval systems only scratch the surface of what

is to be expected in the near future.
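One common way to turn examples and counter examples into a modified query is a Rocchio-style update, sketched below. The text does not name a particular method, so this technique is swapped in for illustration only. The function names, the weights `alpha`, `beta`, and `gamma`, and the two-dimensional toy descriptors are all assumptions.

```python
from math import sqrt


def rocchio_update(query, relevant, non_relevant, alpha=1.0, beta=0.75, gamma=0.25):
    """Move the query vector towards relevant items and away from non-relevant ones."""
    def mean(vectors):
        if not vectors:
            return [0.0] * len(query)
        return [sum(column) / len(vectors) for column in zip(*vectors)]

    positive = mean(relevant)
    negative = mean(non_relevant)
    return [alpha * q + beta * p - gamma * n
            for q, p, n in zip(query, positive, negative)]


def cosine(a, b):
    """Cosine similarity between two descriptor vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query, database):
    """Return item names ordered by decreasing similarity to the query."""
    return sorted(database, key=lambda name: cosine(query, database[name]), reverse=True)


# Hypothetical descriptors for three items in the collection.
database = {
    "tiger": [0.9, 0.1],
    "zebra": [0.1, 0.9],
    "cat": [0.8, 0.3],
}

query = [0.5, 0.5]

# One feedback round: the user marks "tiger" as relevant and "zebra" as non-relevant.
new_query = rocchio_update(query,
                           relevant=[database["tiger"]],
                           non_relevant=[database["zebra"]])
new_ranking = search(new_query, database)

print(new_query)
print(new_ranking)
```

After a single round the counter example drops to the bottom of the ranking. A fuller interaction scheme would also adapt the similarity measure itself, which this sketch leaves fixed.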


ARNOLD W.M SMEULDERS, FRANCISKA DE JONG AND MARCEL WORRING MULTIMEDIA INFORMATION

TECHNOLOGY AND THE ANNOTATION OF VIDEO

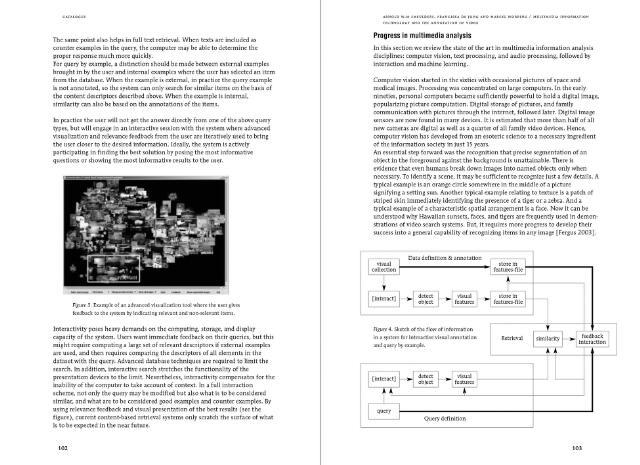

In this section we review the state of the art in multimedia information analysis

disciplines: computer vision, text processing, and audio processing, followed by

interaction and machine learning.

Computer vision started in the sixties with occasional pictures of space and

medical images. Processing was concentrated on large computers. In the early

nineties, personal computers became sufficiently powerful to hold a digital image,

popularizing picture computation. Digital storage of pictures, and family

communication with pictures through the internet, followed later. Digital image

sensors are now found in many devices. It is estimated that more than half of all

new cameras are digital, as are a quarter of all family video devices. Hence,

computer vision has developed from an esoteric science to a necessary ingredient

of the information society in just 15 years.

An essential step forward was the recognition that precise segmentation of an

object in the foreground against the background is unattainable. There is

evidence that even humans break down images into named objects only when

necessary. To identify a scene, it may be sufficient to recognize just a few details. A

typical example is an orange circle somewhere in the middle of a picture

signifying a setting sun. Another typical example relating to texture is a patch of

striped skin immediately identifying the presence of a tiger or a zebra. And a

typical example of a characteristic spatial arrangement is a face. Now it can be

understood why Hawaiian sunsets, faces, and tigers are frequently used in

demonstrations of video search systems. However, more progress is required to develop their

success into a general capability of recognizing items in any image [Fergus 2003],

Figure 4. Sketch of the flow of information in a system for interactive visual annotation and query by example. [Diagram labels: visual collection, data definition, annotation, detect visual object features, store in features-file, query definition, query, retrieval, similarity, feedback, interaction, [interact].]
